When I first started working at Infosys' Innovation Hub in Palo Alto, CA, I was introduced to the concept of Seeing Spaces through a video by Bret Victor, an HCI Research Specialist. With no other background or resources, I was asked to build on Bret's work and imagine its applicability in shortening the product design timeline for enterprises.

I originally thought this request was extremely broad and that the gap between Bret's concepts and their adoption as an industry standard was enormous. I struggled to decide what my output for this task should be. Initially, I showcased Seeing Space concepts for industrial assets (aircraft and robots) through animated videos. I learned the basics of Cinema 4D to produce them and quickly discovered how computationally intensive it is to render full sequences: the final versions of my videos took about 20 hours each to render on a 6-core Mac Pro.

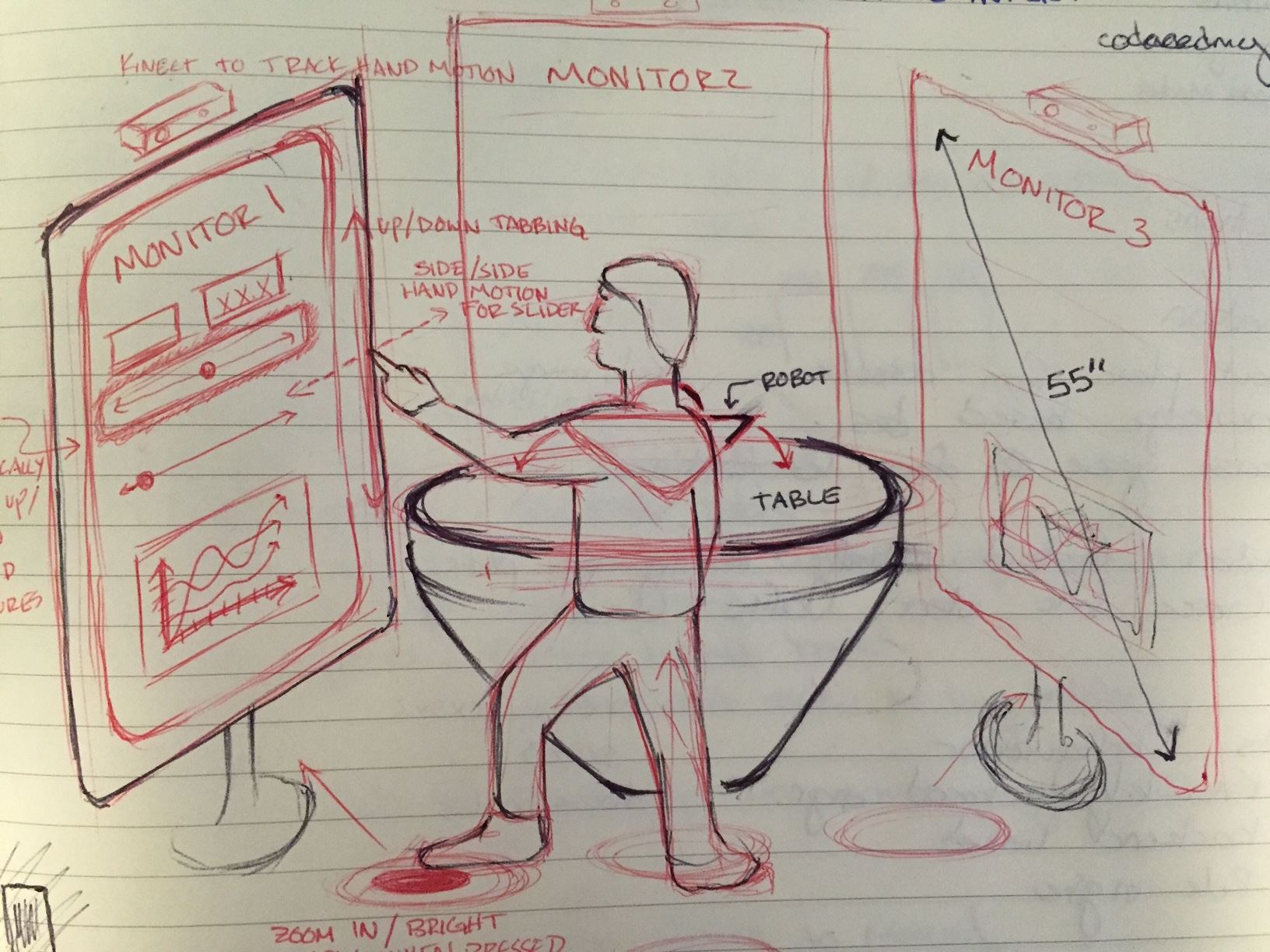

The videos helped facilitate discussion of this topic with clients, but my manager, Sanjay, suggested I build a working physical prototype. Having just joined a technical services company, I had assumed most of our work would be screen-based, so it was exciting to realize this was not the case. A teammate and I researched 3D printers, then sourced and purchased one. I sketched designs for a basic example of a complex product, one combining mechanical components, software, and embedded sensors, which would be the centerpiece of my Seeing Space. Toys served as the best sources of inspiration.

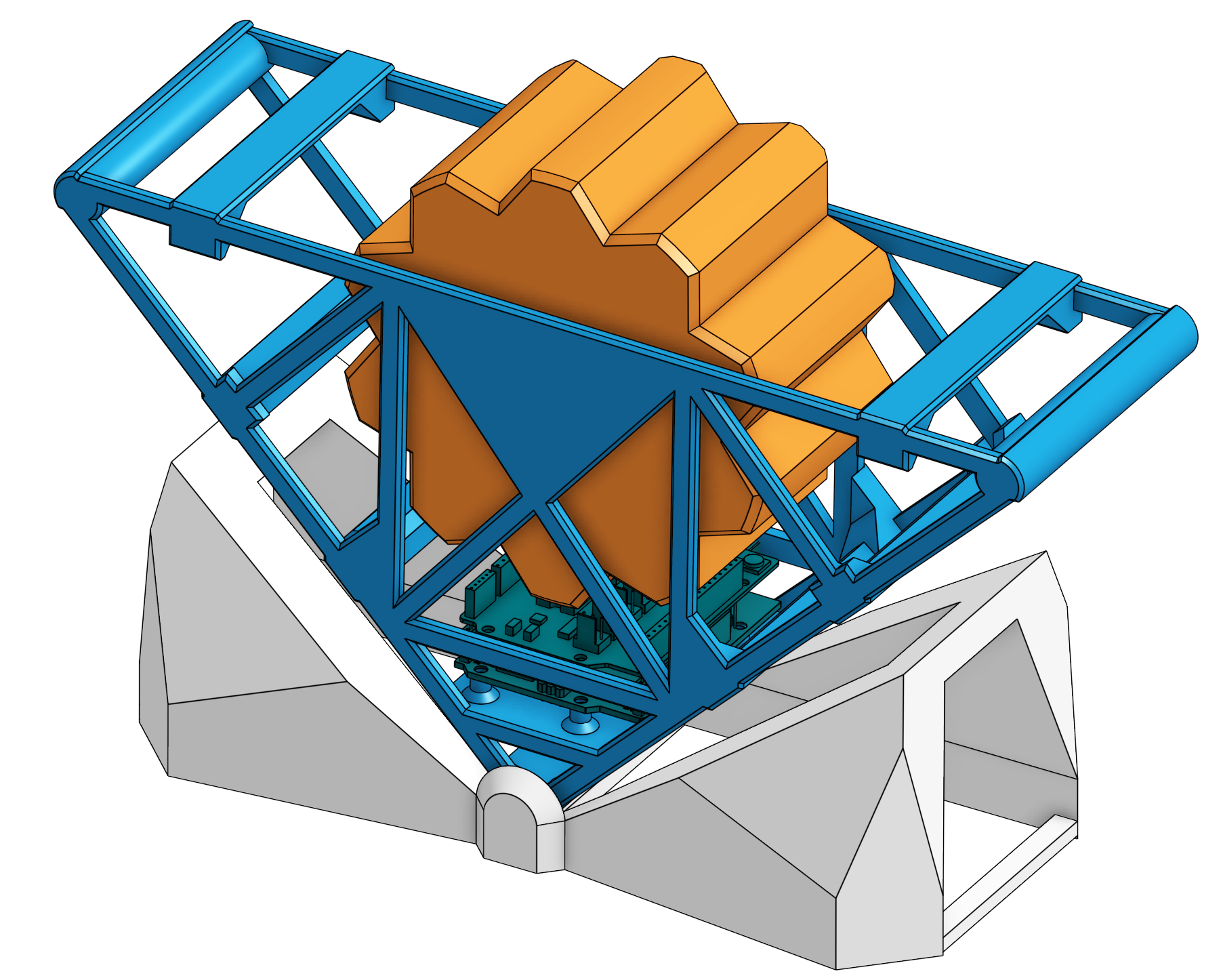

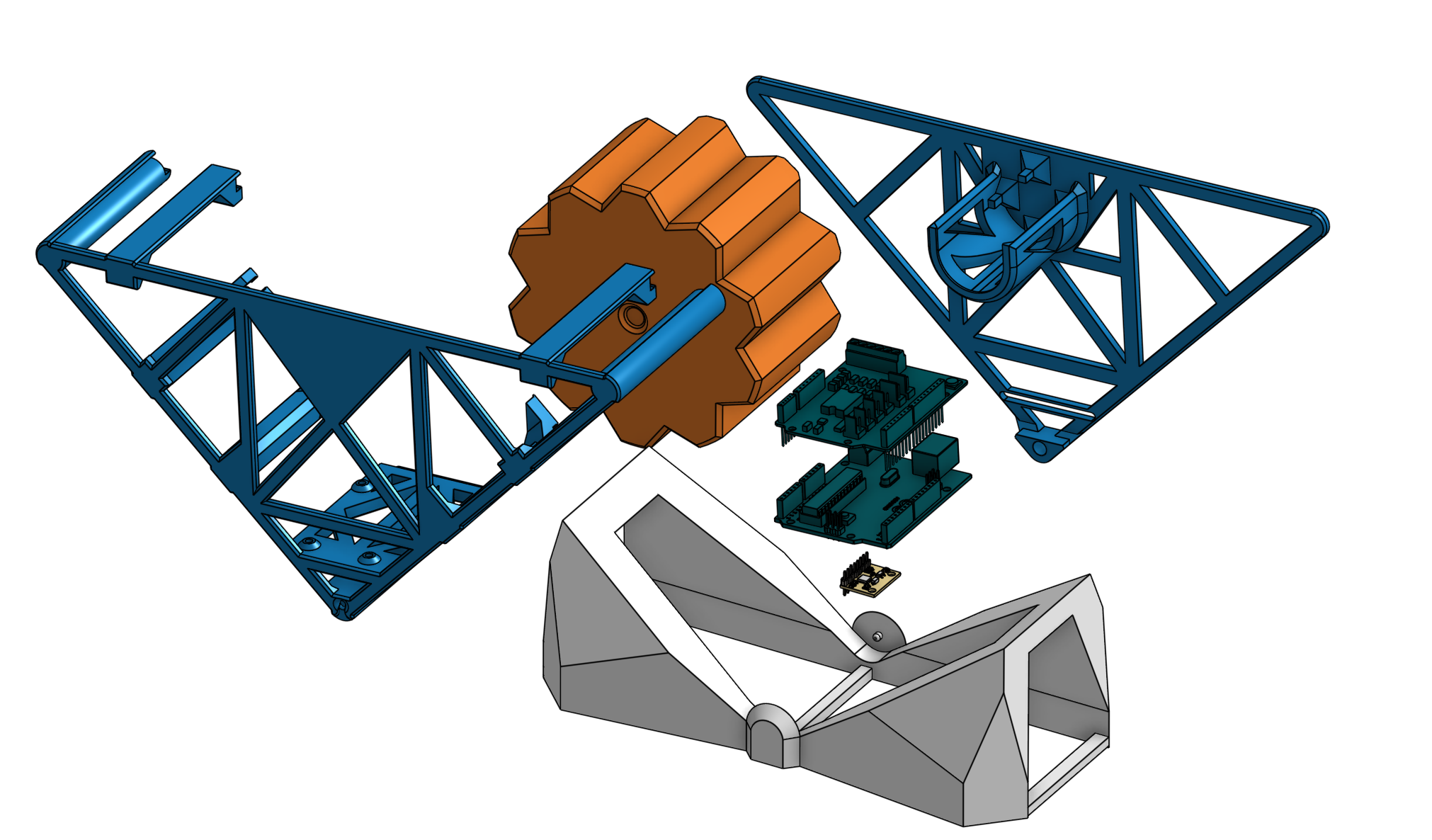

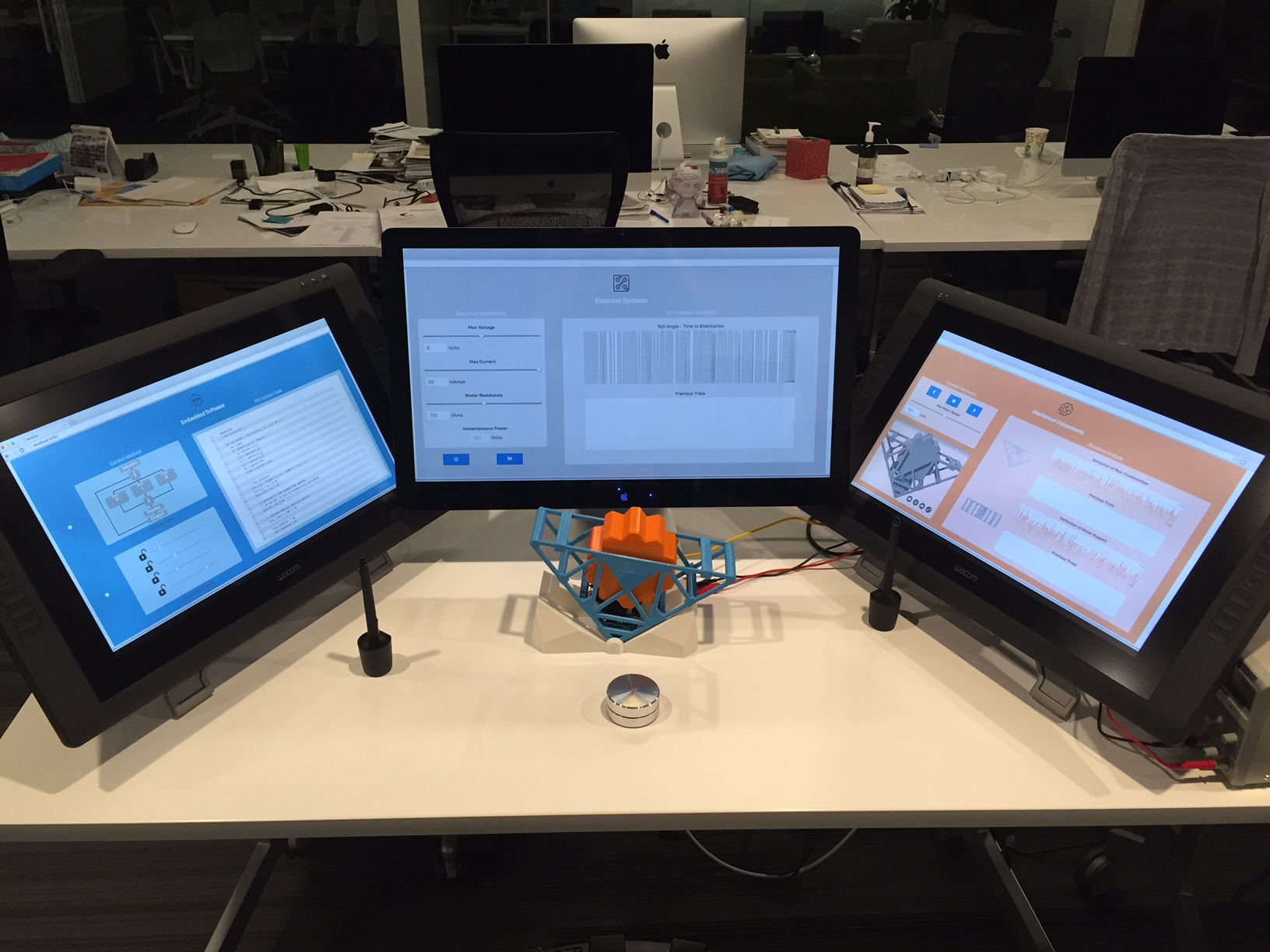

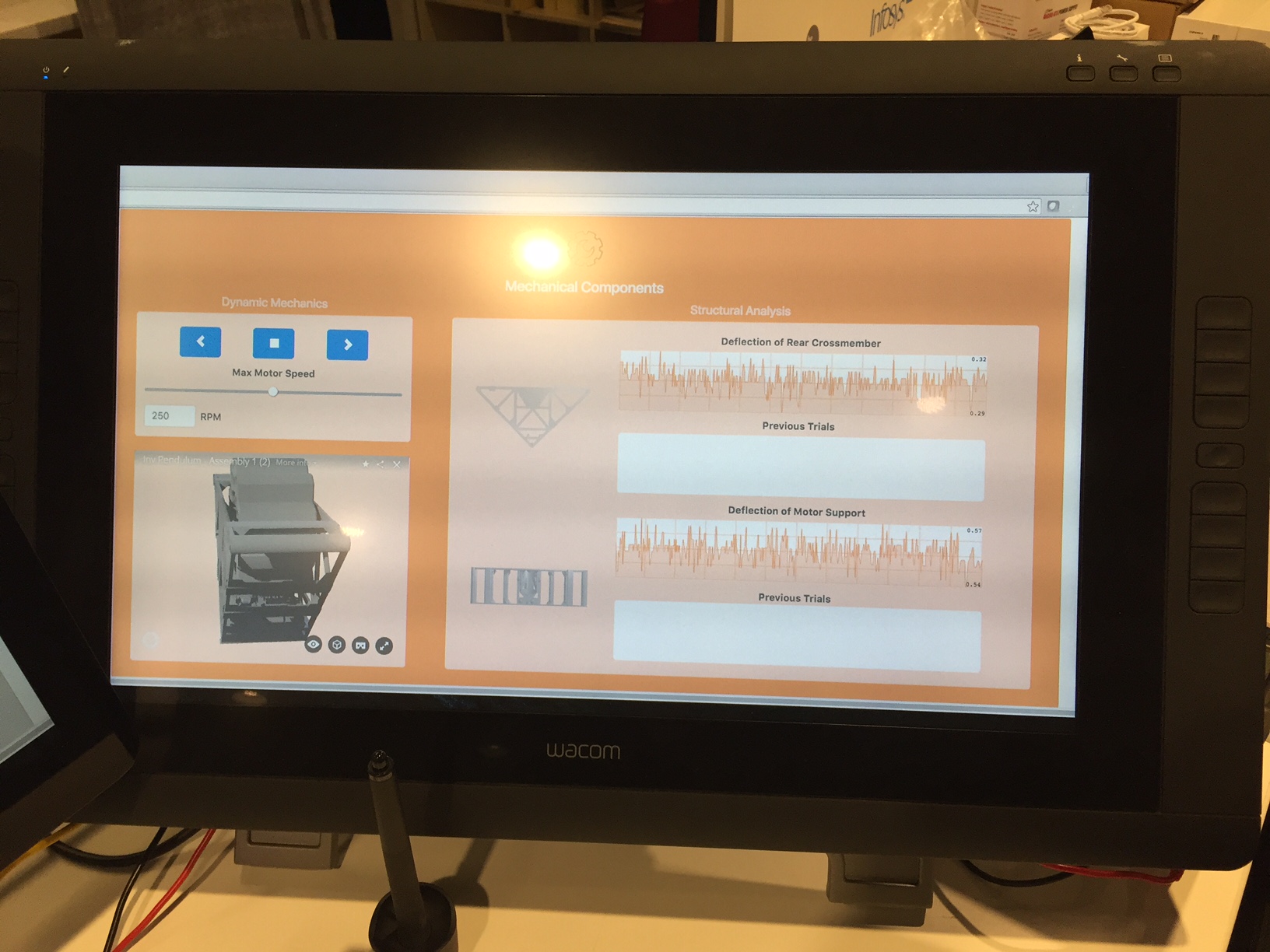

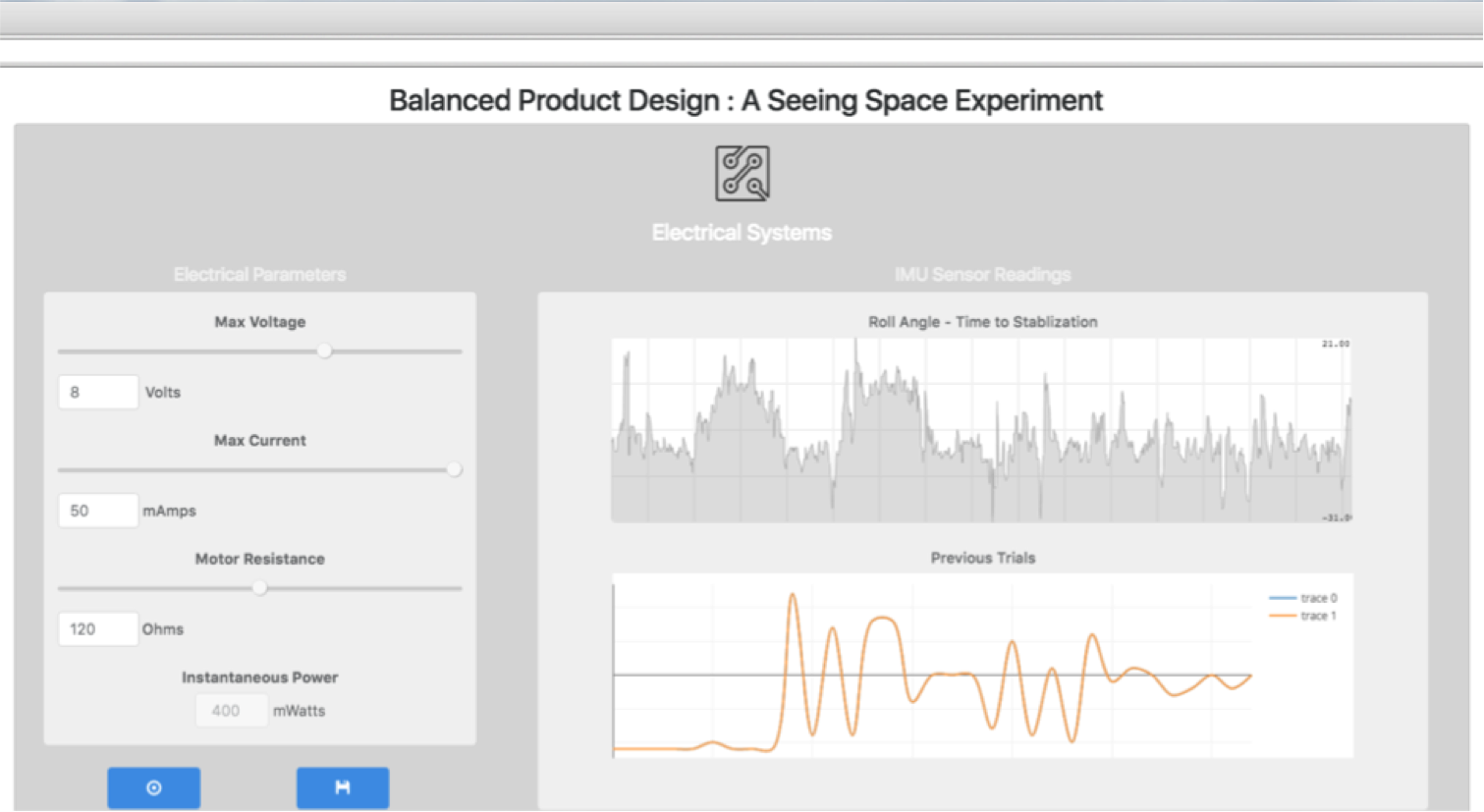

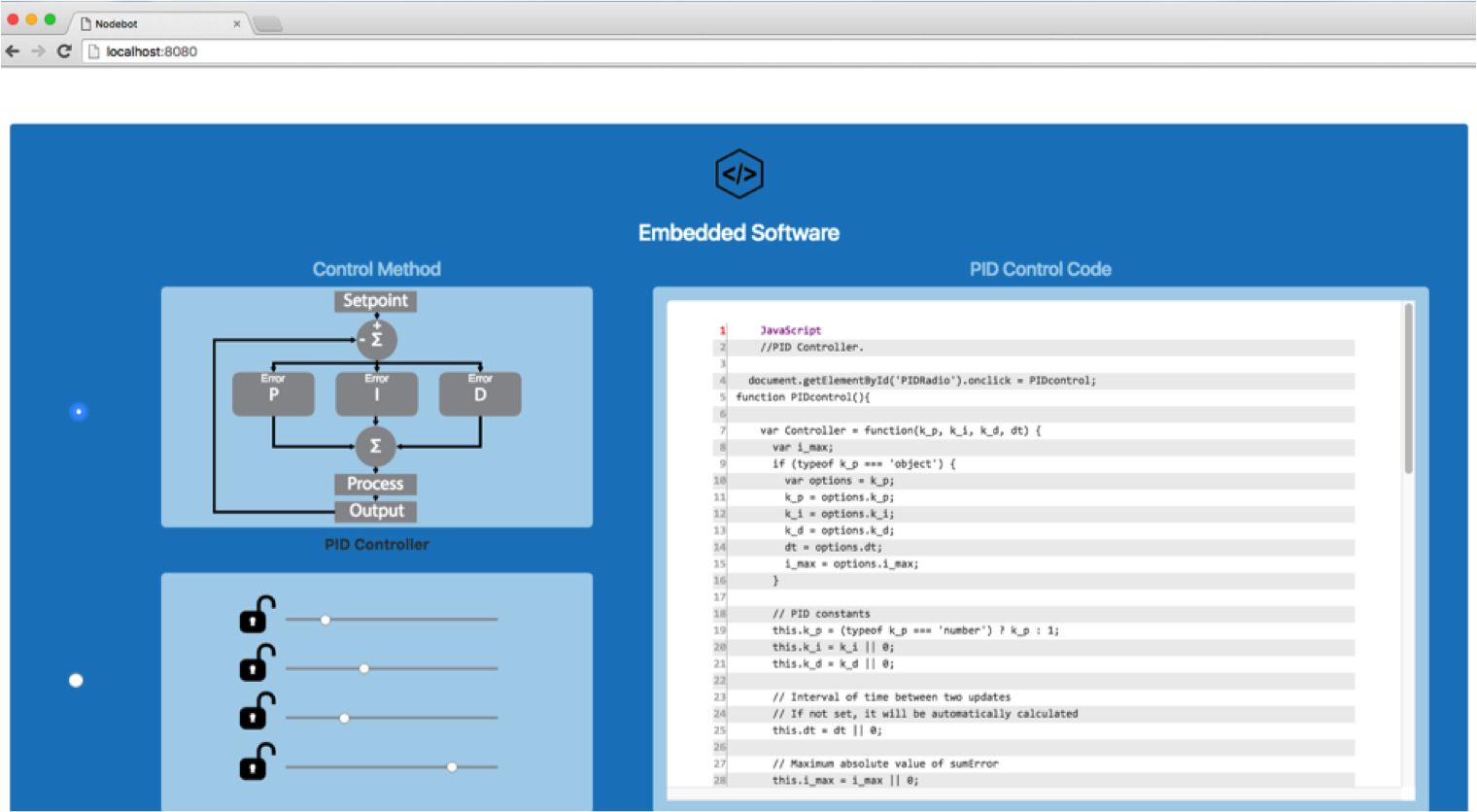

On the left are a few examples of an inverted pendulum. These balance by transferring angular momentum from the spinning reaction wheel to the frame, with help from onboard sensors. Over several iterations, I designed and printed the frame, wheel, and base. The onboard sensors include an Inertial Measurement Unit (IMU) to record the frame's orientation and its rate of change, strain gauges to measure stress on the plastic frame, and an optical speed sensor to detect the speed and direction of the reaction wheel. An Arduino collected this data and streamed it to a locally running server, which displayed the results on a webpage. The webpage accepted multimodal input via knobs, foot pedals, and a stylus for making adjustments to the pendulum. These were rudimentary illustrations of how input could be received without distracting users from their objective: balancing the robot. The user could adjust parameters such as the control method or the voltage supplied to the main DC motor, or modify the code running on the Arduino, and juxtapose the results (physically and digitally) in near real time.
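One of the control methods a user could select or edit is a PID loop. As a rough illustration only (not the code that ran on the Arduino), here is a minimal PID sketch in Python. It assumes the IMU reports a tilt angle in degrees with 0 meaning upright, and that the controller outputs a signed DC motor voltage clamped to an assumed supply limit `v_max`. The class name `PID`, the gains, and the `i_limit` anti-windup clamp are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PID:
    """Minimal PID loop for a reaction-wheel inverted pendulum (illustrative only).

    Input: tilt angle in degrees (0 = upright). Output: a signed motor voltage,
    clamped to +/- v_max. All gains and limits here are hypothetical.
    """
    kp: float
    ki: float
    kd: float
    v_max: float               # assumed supply limit of the DC motor, in volts
    i_limit: float = 10.0      # clamp on the integral accumulator (simple anti-windup)
    setpoint: float = 0.0      # target tilt angle, degrees
    _integral: float = field(default=0.0, init=False)
    _prev_error: Optional[float] = field(default=None, init=False)

    def step(self, angle: float, dt: float) -> float:
        if dt <= 0:
            raise ValueError("dt must be positive")
        error = self.setpoint - angle
        # Accumulate, then clamp, the integral so it stays bounded while the motor is saturated.
        self._integral = max(-self.i_limit, min(self.i_limit, self._integral + error * dt))
        # Skip the derivative on the first sample to avoid a spike from an unknown history.
        derivative = 0.0 if self._prev_error is None else (error - self._prev_error) / dt
        self._prev_error = error
        u = self.kp * error + self.ki * self._integral + self.kd * derivative
        # Never command more voltage than the supply can deliver.
        return max(-self.v_max, min(self.v_max, u))
```

In a control loop, `step` would be called once per IMU sample with the elapsed time `dt`. Switching the control method in the UI would then amount to swapping this object for another controller exposing the same `step` interface. Clamping the integral is the simplest anti-windup scheme; back-calculation is a common alternative.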

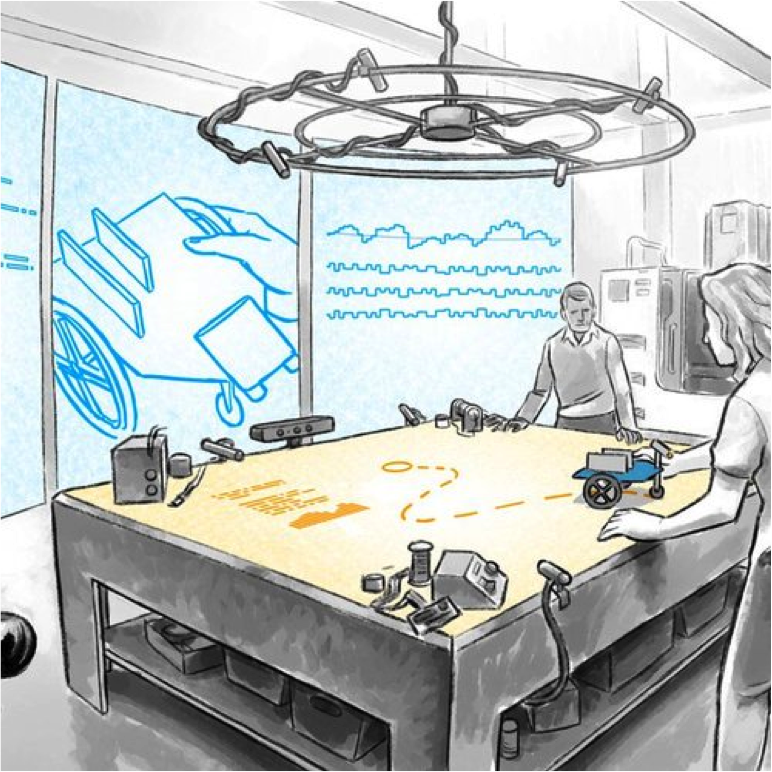

I presented this physical demonstration of a Seeing Space at the 2016 CHI conference, to thought leaders such as Alan Kay, and to executives from Kuka, Cisco, and Toyota. These discussions led to exploring how to better enable people to visualize and make sense of large datasets. I created a VR Seeing Space to try to address this for the IT department of a partner client.

Through developing and presenting these demos, I noticed that the concepts seemed more credible as the medium evolved from a presentation, to a contextualized video, to a physical experience. It was exciting to contribute to the work being done across different communities to advance this vision, and to see how receptive forward-thinking companies were to experimenting with it.

Seeing Space: Room-Scale VR

This two-day design sprint explored how VR could help with visual pattern discovery in large datasets. The user can visualize 15,000 lines of code while retaining the ability to zoom into a single line for editing. Additionally, the user can see how and where their code changes are reflected in the business process and infrastructure. Viewing the code zoomed out created a unique experience, with the code filling the user's view out to the edges of their peripheral vision. The headset proved difficult to wear for extended periods, given its resolution and the "screen door" effect that occurred at certain zoom levels.
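The resolution problem can be framed simply: whether a line of code is legible depends on how many headset pixels its text covers, and that number falls as the text moves farther away. The Python sketch below estimates that pixel height from the visual angle the text subtends, and then switches between drawing the text and drawing a simple colored bar. It is a hypothetical level-of-detail rule, not how the sprint prototype worked. The value `pixels_per_degree=10` is a rough assumed figure for a 2016-era headset, and `min_text_px=8` is an arbitrary legibility threshold.

```python
import math


def text_height_pixels(text_height_m: float, distance_m: float,
                       pixels_per_degree: float = 10.0) -> float:
    """Approximate on-screen height, in pixels, of text viewed head-on.

    Uses the visual angle subtended by the text: 2 * atan(h / (2 * d)).
    pixels_per_degree is an assumed headset figure, not a measured one.
    """
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    angle_deg = math.degrees(2 * math.atan(text_height_m / (2 * distance_m)))
    return angle_deg * pixels_per_degree


def render_mode(text_height_m: float, distance_m: float,
                pixels_per_degree: float = 10.0, min_text_px: float = 8.0) -> str:
    """Hypothetical level-of-detail rule: draw readable text up close,
    fall back to a colored bar per line when the text would be illegible."""
    px = text_height_pixels(text_height_m, distance_m, pixels_per_degree)
    return "text" if px >= min_text_px else "bar"


if __name__ == "__main__":
    # Zooming in is modeled here as bringing the code closer to the viewer.
    for d in (2.0, 0.5, 0.1):
        print(d, render_mode(0.01, d))
```

Under these assumptions, 1 cm text at 2 m covers under 3 pixels and would be drawn as a bar. The same text at 10 cm covers about 57 pixels and would be drawn as text.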

Gallery

CAD model of the inverted pendulum with blue truss frame, orange reaction wheel, and grey base

Exploded view showing frame halves, reaction wheel, electronics mount, and base assembly

Initial sketch of the Seeing Space concept: user surrounded by monitors, robot on table, Kinect tracking

Concept illustration of a Seeing Space with projected data, sensors, and collaborative design

Wireframe for the Seeing Space web interface: control strategy, electrical parameters, and mechanical components

Balanced Product Design web app with embedded software, electrical systems, and mechanical components panels

Infosys Seeing Space marketing banner detailing subsystem interactions, input modalities, and real-time monitoring

Multimodal input: stylus, foot pedal, and rotary knob for hands-free parameter adjustment

First pendulum iteration: grey 3D-printed frame with DC motor and Arduino wiring

Early prototype with Arduino mounted on grey triangular base

Blue and orange pendulum on desk at the Infosys Innovation Hub in Palo Alto

Close-up of strain gauge amplifier boards wired to the pendulum frame

Strain gauge mounted on the 3D-printed frame to measure structural stress

Detail of a strain gauge bonded to the blue truss frame

Macro view of a strain gauge at the pendulum frame joint

Three-monitor Seeing Space setup: embedded software, electrical systems, and mechanical components

Seeing Space demo at an executive presentation with three Wacom displays


Rotary knob used for hands-free parameter adjustment during balancing

Web app electrical systems panel: voltage, current, resistance controls and roll angle data

Web app embedded software panel: control method selection and PID code editor

Web app mechanical components panel: 3D model viewer and structural analysis data

3D printer bed with early reaction wheel prototype

3D-printed reaction wheel with pennies for weight on the printer bed

Close-up of 3D-printed hinge joint on the blue pendulum frame

User research: pain points in current product design workflows

Observed areas for improvement: 3D modeling in 3D, real-time simulation, adaptive tools

The Infosys Innovation Hub workspace in Palo Alto with Post-it-covered walls

15,000 lines of code visualized in room-scale VR for pattern discovery

Full Balanced Product Design web application with all three subsystem panels active