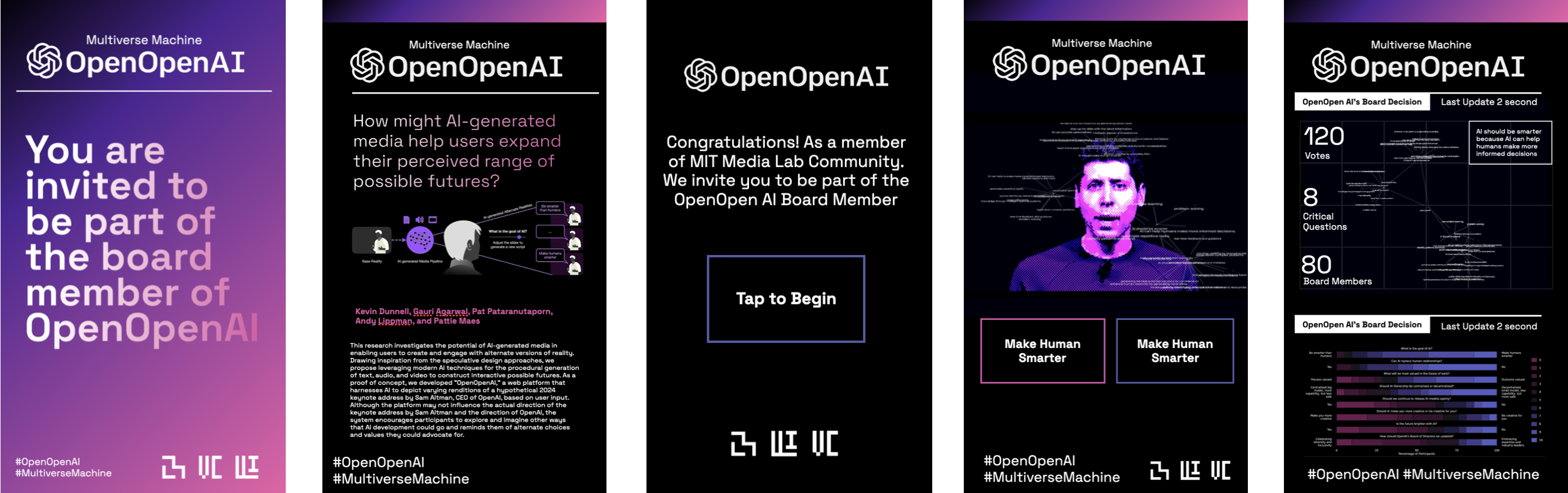

The capacity to influence the trajectory of AI is concentrated among a very small number of people. The rest of us are positioned as consumers of whatever future they decide to build. We chose the name "OpenOpenAI" to reflect on the paradox of a company whose mission was to democratize AI for humanity while pursuing a closed-source approach. The question we wanted to ask was simple: can we use the very technology under scrutiny, AI itself, to open up public participation in shaping its direction?


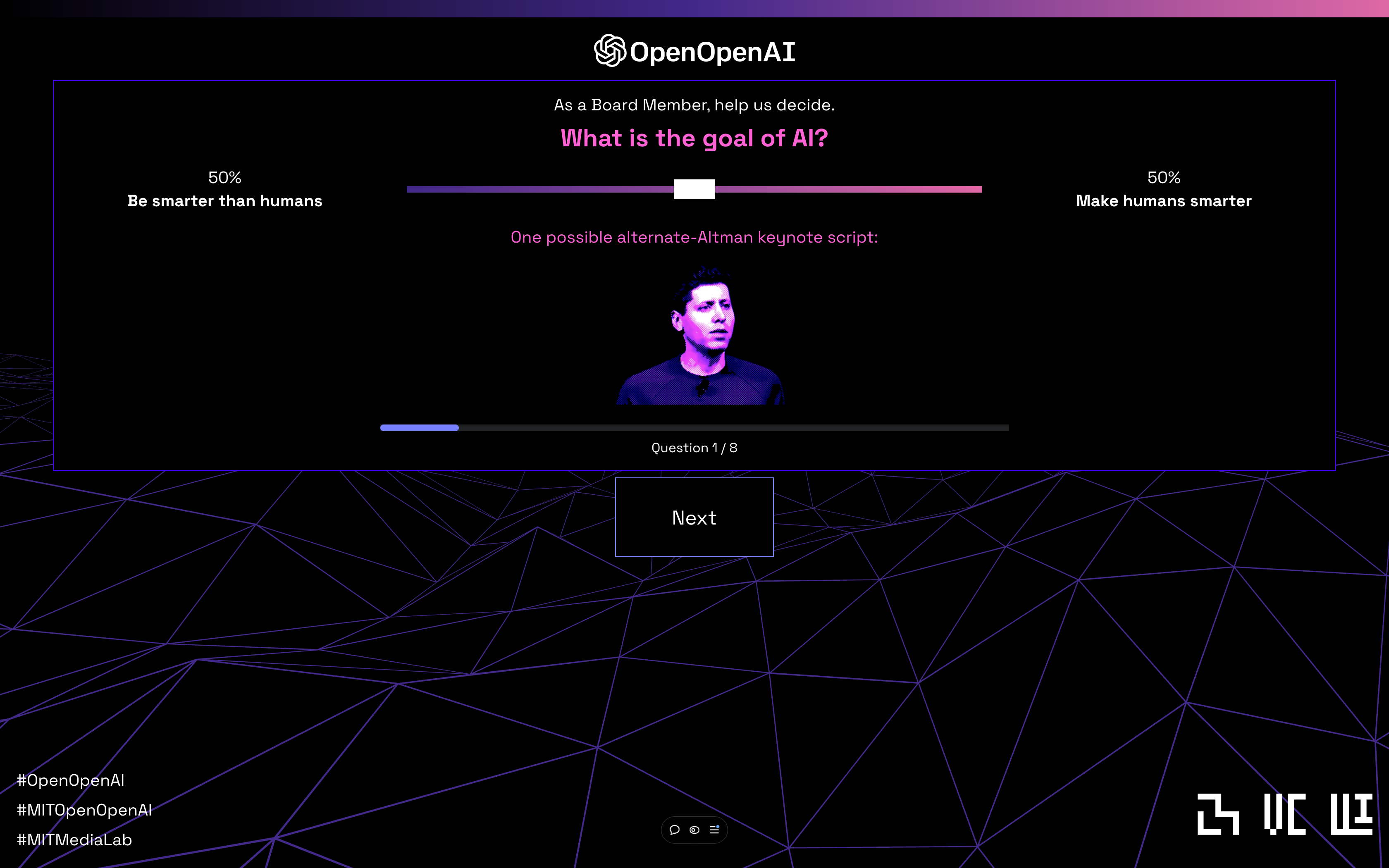

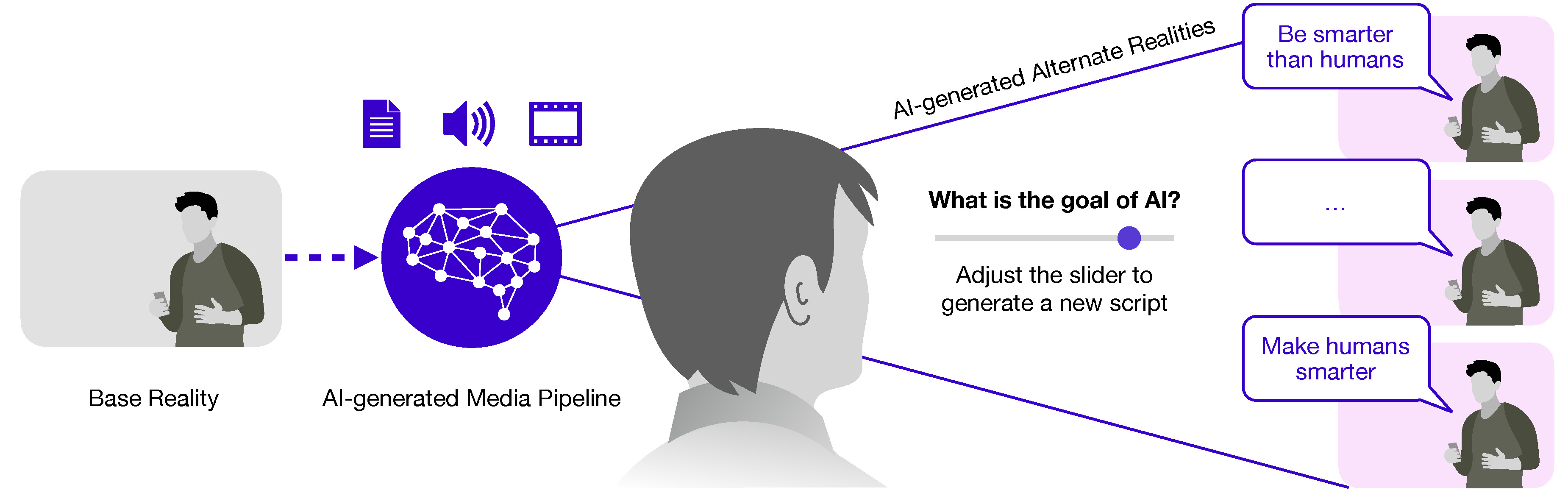

The project is a web platform that generates alternate versions of a hypothetical 2024 OpenAI keynote address by Sam Altman. We chose OpenAI's prominence deliberately: it is a topic most people have already formed opinions about, which makes it a productive ground for reflection. The interface presents eight question cards covering topics like the goal of AI, the future of work, AI ownership, creativity, and OpenAI's board composition. Each question uses a range slider rather than a binary choice, because these issues exist on a spectrum. As users adjust the sliders, the system dynamically generates a unique narrative script at the intersection of their positions.
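As a rough illustration of how a slider position might translate into a blend between two stances, here is a minimal sketch. The `QuestionCard` class, the `slider_to_weights` helper, the 0 to 100 slider range, and the example stances are all assumptions made for illustration; they are not taken from the platform's actual code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuestionCard:
    """One question card with a stance at each end of its slider (hypothetical)."""
    topic: str
    left_stance: str
    right_stance: str


def slider_to_weights(value: int) -> tuple[int, int]:
    """Map a slider value in [0, 100] to (left %, right %) weights."""
    if not 0 <= value <= 100:
        raise ValueError("slider value must be between 0 and 100")
    return 100 - value, value


def describe_position(card: QuestionCard, value: int) -> str:
    """Summarize where a user sits between the two stances of a card."""
    left, right = slider_to_weights(value)
    return (
        f"{card.topic}: {left}% '{card.left_stance}' / "
        f"{right}% '{card.right_stance}'"
    )


# Example stances are invented for this sketch.
card = QuestionCard("AI ownership", "open source", "closed source")
summary = describe_position(card, 30)
```

Treating each slider as a pair of percentages that sum to 100 keeps the position on a spectrum rather than collapsing it to a binary choice, which is the property the interface is designed around.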

The technical pipeline chains together several generative models. GPT-4 blends divergent narrative scripts conditioned on user-specified percentages. The synthesized text is fed to PlayHT's Parrot model for zero-shot voice cloning, producing audio in a cloned version of Altman's voice. That audio is then synchronized to video using Wav2Lip, a CNN-based lip-sync model, applied to frames from the real DevDay 2023 keynote. The result is a synthetic keynote video that looks and sounds plausible while presenting a future the user helped construct.
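The chaining described above can be sketched as three stages passed in as plain functions. This is an illustrative outline only: `build_blend_prompt`, `KeynotePipeline`, the prompt wording, and the stage signatures are hypothetical, and the stub lambdas stand in for the real GPT-4, PlayHT, and Wav2Lip calls, none of which are shown here.

```python
from dataclasses import dataclass
from typing import Callable


def build_blend_prompt(script_a: str, script_b: str, percent_a: int) -> str:
    """Compose an instruction asking a language model to mix two scripts."""
    if not 0 <= percent_a <= 100:
        raise ValueError("percent_a must be between 0 and 100")
    percent_b = 100 - percent_a
    return (
        "Blend the two keynote scripts below into one speech, "
        f"weighting Script A at {percent_a}% and Script B at {percent_b}%.\n\n"
        f"Script A:\n{script_a}\n\nScript B:\n{script_b}"
    )


@dataclass
class KeynotePipeline:
    """Chains text blending, voice cloning, and lip sync (all injected)."""
    blend_text: Callable[[str], str]     # prompt -> blended script
    clone_voice: Callable[[str], bytes]  # script -> audio bytes
    lip_sync: Callable[[bytes], bytes]   # audio bytes -> video bytes

    def run(self, prompt: str) -> bytes:
        script = self.blend_text(prompt)
        audio = self.clone_voice(script)
        return self.lip_sync(audio)


# Stub stages so the sketch runs without any external service.
pipeline = KeynotePipeline(
    blend_text=lambda prompt: "stub script",
    clone_voice=lambda script: script.encode("utf-8"),
    lip_sync=lambda audio: b"VIDEO:" + audio,
)
prompt = build_blend_prompt("AI should be open.", "AI should be closed.", 70)
video = pipeline.run(prompt)
```

Injecting each stage as a callable keeps the orchestration independent of any particular vendor API, so a stage can be swapped or mocked without touching the others.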

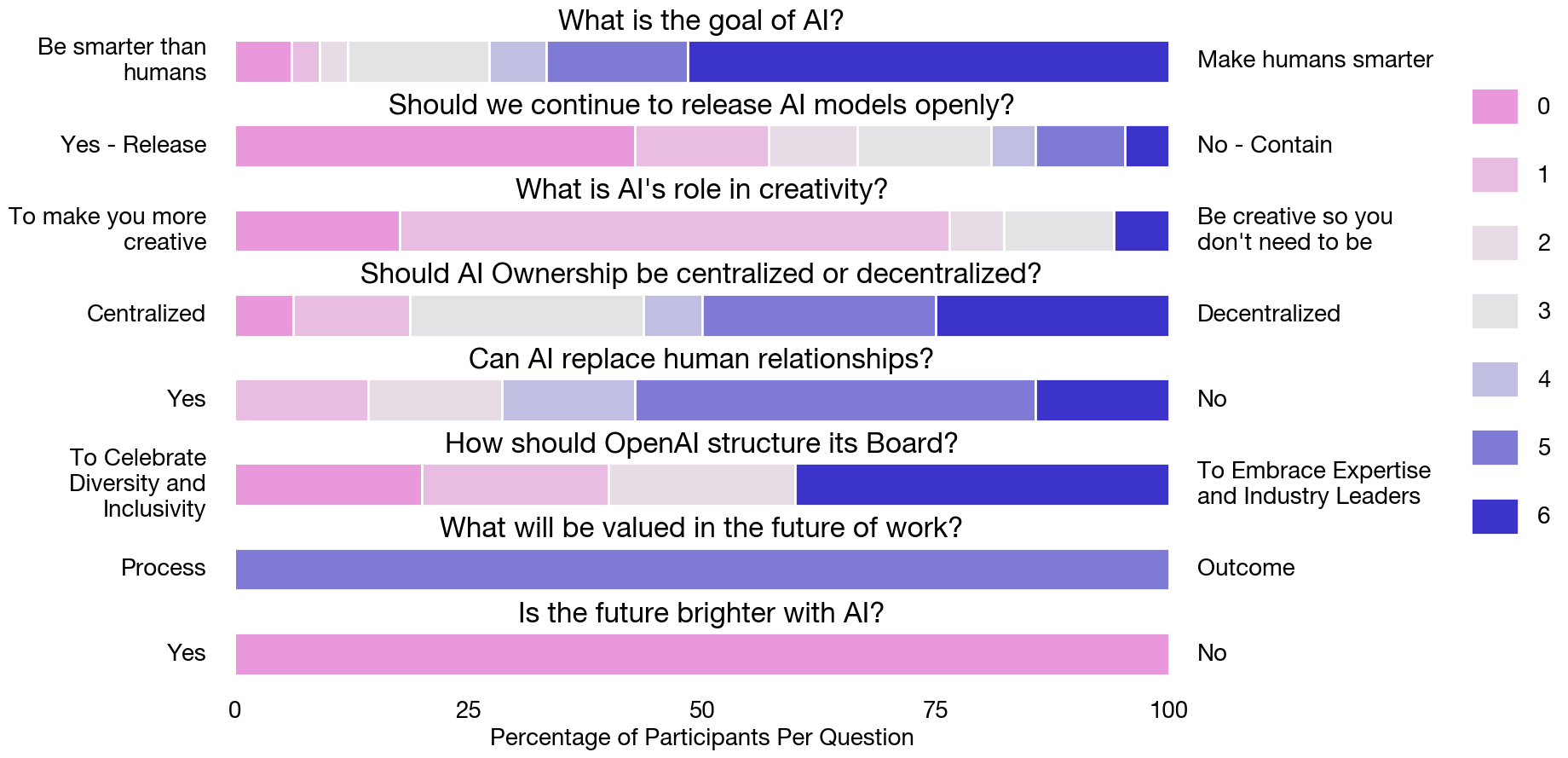

In a pilot study with 30 participants, the platform performed strongly on two fronts: opening participants to new perspectives and fostering a sense of engagement and control. Users valued the personalization, the ability to see opposing viewpoints without having to seek them out, and the way the experience made abstract AI governance questions feel concrete and immediate. Some found the deepfake element unsettling. We consider that a productive discomfort: it surfaces real concerns about synthetic media while demonstrating the technology's capacity for constructive use.

The broader motivation is that speculative design has historically been confined to museums and academic exhibitions. We think AI-generated media can make it participatory and scalable, giving ordinary people a way to explore and advocate for the futures they want rather than passively accepting the ones being built for them.

Presented at ACM CHI 2024

Gallery

OpenOpenAI presentation at ACM CHI 2024

Physical installation at MIT Media Lab with five standing displays

OpenOpenAI semantic map display showing multiple synthetic Altman keynote variations

Futures cone diagram: possible, plausible, probable, and preferred futures over time

Publications

- AI-Generated Media for Exploring Alternate Realities
  May 2024 · ACM CHI (Conference on Human Factors in Computing Systems)

- OpenOpenAI and Alternate Altman: Democratizing the Future of AI through Speculative Participatory Media
  December 2024 · NeurIPS (Conference on Neural Information Processing Systems)